All sorts of changes are afoot at FlySorter.

First, the physical one: I’ve moved back to Seattle after 15 months or so on the East Coast. Boston was a wonderful environment for the company, with a host of academic labs as well as a great workspace in Industry Lab. I made some excellent connections there, and started a collaboration with the de Bivort Lab at Harvard. FlySorter is developing automation techniques to help them run bigger experiments faster and with less work, and we’re excited about sharing the results soon. But a number of other forces drew me back to Seattle, and so here I am (happily, I should note).

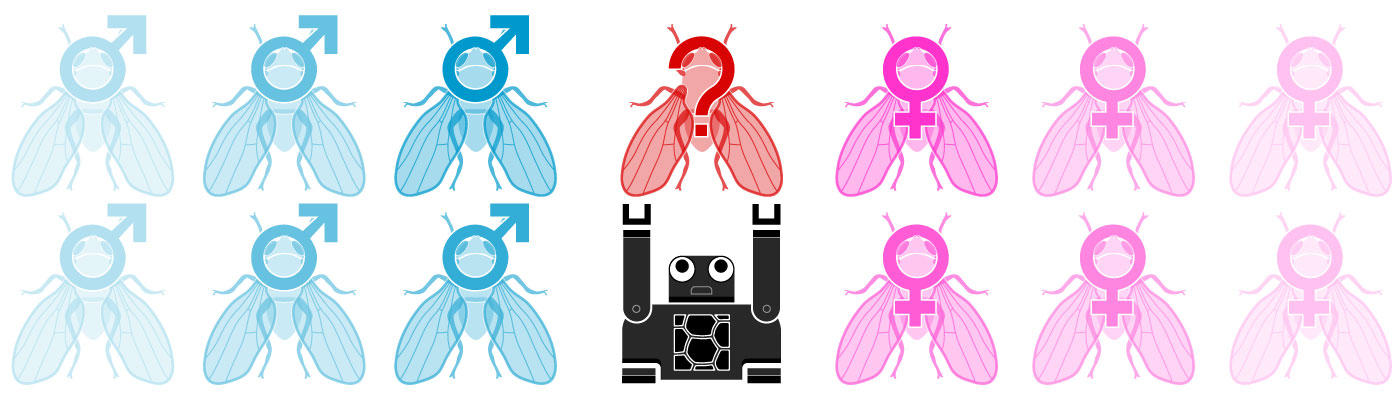

Second, the technological one: we are radically shifting our approach to sorting flies. As you can see in the video on our technology page, the robotic system we developed images anesthetized flies on a fly pad from above. Researchers essentially do the same thing when sorting by hand (using a microscope, of course), but they have several advantages over a computer vision-based system. Namely, they have excellent fine motor control to manipulate the delicate flies (usually with a paint brush) to reveal exactly the right part(s) of the fly. In addition, they have powerful computers (ok, brains) that have no trouble processing visual information and discerning which parts of a fly are which.

I’m proud of the work that Matt and I have done to date. Our two-step classification software works remarkably well, considering we don’t get to choose the orientation of the flies (we’re more or less stuck looking at them however they fall on the pad). We’ve demonstrated 97% accuracy sorting by sex with this technique, and we presented our work in poster form at the recent Drosophila Research Conference in Chicago.

We also realize we’ve come up against the limits of that approach. While micro-manipulation might be able to help us see a more complete fly, it would add to the cost and complexity of every machine, and also slow things down. So we began thinking about alternatives.

We started discussing ways to image flies that were awake and walking around, and landed on sandwiching flies between two pieces of plexiglass (which has been done before for other purposes). Around the same time, a group in Switzerland published this paper documenting their efforts to automate sorting based on morphometric traits. They did, in fact, build a sorter, although its throughput is on the slower end: they quote it as about one fly every 40 seconds, and also note that they don’t actually image every fly in a vial, since they rely on the flies to walk themselves up into the apparatus. The Swiss group has made their imaging software available under the GPL open source license, a huge gift to the fly community.

FlySorter has made some big steps towards dramatically increasing the throughput of this kind of system. We are building a fly dispenser to pass in single awake flies from a standard vial, and preliminary testing shows a throughput closer to 20 flies a minute. Much will depend on the speed of the classification software, but ultimately, we believe we are on track to build an affordable bench-top sorter, with the capability to collect a huge amount of data on every fly in a vial.
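For a rough sense of what those numbers mean, here is a back-of-the-envelope comparison in Python. It uses only the two figures quoted above (about one fly every 40 seconds, and roughly 20 flies a minute in preliminary dispenser testing). The 100-fly vial is a made-up number for illustration, and the dispenser rate assumes classification keeps pace, which is still an open question.

```python
# Back-of-the-envelope throughput comparison using the two figures quoted above.
SWISS_SECONDS_PER_FLY = 40.0    # "about one fly every 40 seconds"
DISPENSER_FLIES_PER_MIN = 20.0  # preliminary dispenser testing, not a final spec

# Hypothetical vial size, chosen only to make the arithmetic concrete.
VIAL_SIZE = 100


def flies_per_minute(seconds_per_fly: float) -> float:
    """Convert a seconds-per-fly figure into flies per minute."""
    return 60.0 / seconds_per_fly


def minutes_per_vial(flies_in_vial: int, rate_per_min: float) -> float:
    """Time to process a vial at a given rate, ignoring setup and handling."""
    return flies_in_vial / rate_per_min


swiss_rate = flies_per_minute(SWISS_SECONDS_PER_FLY)
speedup = DISPENSER_FLIES_PER_MIN / swiss_rate

print(f"Published rate:  {swiss_rate:.1f} flies/min")
print(f"Dispenser rate:  {DISPENSER_FLIES_PER_MIN:.1f} flies/min")
print(f"Ratio:           {speedup:.1f}x")
print(f"Minutes per {VIAL_SIZE}-fly vial: "
      f"{minutes_per_vial(VIAL_SIZE, swiss_rate):.0f} vs "
      f"{minutes_per_vial(VIAL_SIZE, DISPENSER_FLIES_PER_MIN):.0f}")
```

This only compares raw rates. It says nothing about accuracy or about how many of the flies in a vial actually get imaged.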

I’m not quite ready to share our solution, but it should be proven out in the next couple of months. Stay tuned for more details!